During OpenSIPS trainings, one of the first questions that pops up when we talk about configuring OpenSIPS is: “How do I know how many processes I should configure on my OpenSIPS?”

Later, this question escalates into one of the most troubling questions for people operating OpenSIPS: “Does my OpenSIPS have enough processes to support my traffic?”

There are no simple answers to these questions, as the number of processes needed depends on the amount of traffic, on the complexity of your routing logic, on the ratio between I/O-bound and CPU-bound operations, and on many other factors. Not to mention that the amount of traffic you receive is never constant.

Besides having enough processing power (as processes), this issue also ties into another topic: making wise use of your server resources. In the age of cloud and virtualization, server resources such as CPU and memory are things you pay for dynamically (pay as you use). Therefore, setting up 100 processes (just to be on the safe side) may cost you money, considering the extra resources needed to handle those processes, even if they are idle.

Bottom line: underestimating the number of processes may translate into “not being able to cope with the traffic”, while overestimating it may translate into “poor resource management costs me money”.

So, what’s the solution?

Auto scaling

OpenSIPS 3.0 is the devops engineer’s best friend: say “goodbye” to a static number of processes and “welcome” to auto-scaling.

The auto-scaling support translates into OpenSIPS’s automatic ability to fork or terminate processes, depending on the internal load of OpenSIPS. A higher internal load will lead to forking (on demand) new processes, while a low internal load will trigger the termination of some processes.

The entire auto-scaling process is governed by scaling rules: load thresholds, monitoring cycles and lower/upper limits. OpenSIPS uses all of these to decide automatically, at run time, whether more or fewer processes are needed.

Everything is done in a consistent way with respect to traffic handling, asynchronous operations and other internal resources: when a process is to be terminated, OpenSIPS first drains and flushes it, to be sure that nothing is lost.

So you don’t have to worry about “guessing” the right number, about “too much traffic” or about “wasting resources”.

Just “SIP back and relax” by using OpenSIPS.

Configuring the auto scaling

OpenSIPS allows you to control the number of processes for certain groups of processes:

- TCP worker processes – a single group for all the TCP processes (all interfaces)

- UDP worker processes – each UDP interface has its own group of processes

- timer processes – a single group of extra timer processes

Each group auto-scales its number of processes individually, depending strictly on the load of the processes in that group. The groups do not influence each other. For example, a high load on UDP interface A will result in forking additional processes in the group serving UDP interface A, without changing the number of processes for the other UDP interfaces or for the TCP or timer groups.

For each group, the auto-scaling is controlled via an auto_scaling_profile:

    auto_scaling_profile = PROFILE_A
         scale up to 6 on 70% for 4 cycles within 5
         scale down to 2 on 18% for 10 cycles

This profile allows the group to fork up to 6 processes. A new process is forked when the overall load of the group is higher than 70% for 4 cycles within a 5-cycle monitoring window. A cycle is a time unit used for monitoring (like 2 seconds).

The profile also allows the group to scale down to a minimum of 2 processes. A process is terminated when the overall load of the group stays lower than 18% for 10 cycles. The down-scaling part of the profile is optional. If it is not defined, OpenSIPS will never scale down, only up.
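To make the two rules concrete, here is a minimal Python sketch of how such a decision could be taken from a history of per-cycle load samples. It is not the OpenSIPS implementation: the `Profile` class, the `decide` function and their field names are hypothetical, and the sketch assumes that "for 4 cycles within 5" means at least 4 of the last 5 samples are above the threshold.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Profile:
    """Hypothetical model of one auto_scaling_profile."""
    up_limit: int                     # "scale up to 6"
    up_threshold: float               # "on 70%"
    up_cycles: int                    # "for 4 cycles"
    up_window: int                    # "within 5"
    down_limit: Optional[int] = None  # "scale down to 2" (optional part)
    down_threshold: float = 0.0       # "on 18%"
    down_cycles: int = 0              # "for 10 cycles"


def decide(profile: Profile, loads: List[float], procs: int) -> int:
    """Return +1 (fork), -1 (terminate) or 0 (no change).

    `loads` holds the group load in percent, one sample per cycle,
    most recent last. Assumption: the up rule fires when at least
    `up_cycles` samples in the last `up_window` exceed the threshold.
    """
    recent = loads[-profile.up_window:]
    high = sum(1 for load in recent if load > profile.up_threshold)
    if high >= profile.up_cycles and procs < profile.up_limit:
        return 1

    # The down rule only exists if the profile defines it.
    if profile.down_limit is not None and procs > profile.down_limit:
        tail = loads[-profile.down_cycles:]
        if len(tail) == profile.down_cycles and all(
            load < profile.down_threshold for load in tail
        ):
            return -1
    return 0


# PROFILE_A from the text above.
PROFILE_A = Profile(6, 70.0, 4, 5, down_limit=2, down_threshold=18.0, down_cycles=10)
```

With this sketch, `decide(PROFILE_A, [80, 75, 60, 90, 85], 4)` asks for a fork, because 4 of the last 5 samples are above 70%, while ten consecutive samples at 7% with 4 processes ask for a termination.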

Once you have defined your auto-scaling profiles (yes, you can define more than one), you simply assign them to certain groups of processes:

    tcp_workers = 4 use_auto_scaling_profile PROFILE_SIP
    timer_workers = 2 use_auto_scaling_profile PROFILE_TIMER
    listen=udp:127.0.0.1:5060 use_auto_scaling_profile PROFILE_SIP
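Note that the TCP group and the UDP interface share the same profile here, yet each group still scales on its own. A small Python sketch of that bookkeeping, purely illustrative: the `Group` class, the `fork_one` helper, the upper limits in `UP_LIMIT` and the initial UDP process count are all assumptions, not values taken from OpenSIPS.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Group:
    profile: str  # name of the auto-scaling profile assigned to the group
    procs: int    # current number of processes in the group


# Upper limits per profile: hypothetical values, chosen for illustration.
UP_LIMIT = {"PROFILE_SIP": 6, "PROFILE_TIMER": 4}

groups: Dict[str, Group] = {
    "tcp": Group("PROFILE_SIP", 4),
    "timer": Group("PROFILE_TIMER", 2),
    # Initial UDP process count is assumed; the listen line does not set it.
    "udp:127.0.0.1:5060": Group("PROFILE_SIP", 4),
}


def fork_one(groups: Dict[str, Group], name: str) -> bool:
    """Add one process to a single group, respecting its profile's limit."""
    group = groups[name]
    if group.procs >= UP_LIMIT[group.profile]:
        return False  # already at the profile's upper limit
    group.procs += 1  # only this group changes; the others are untouched
    return True
```

Calling `fork_one(groups, "udp:127.0.0.1:5060")` grows only the UDP group; the TCP group keeps its 4 processes even though it uses the same profile.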

How does it work?

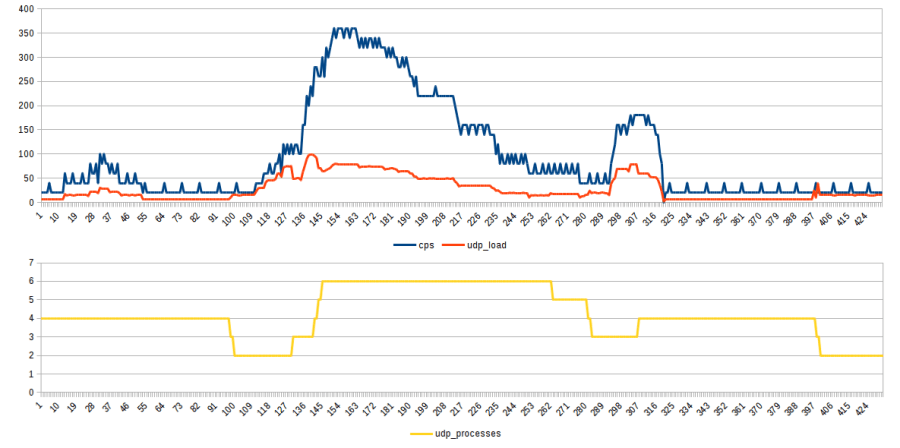

Let’s see how the auto-scaling engine reacts, considering the above profile for an UDP interface where we send some variable traffic (as CPS) with SIPP (click to enlarge):

Before explaining how it works, let me point out the most relevant aspect of this chart: even when the CPS increases dramatically, the internal OpenSIPS load is contained by creating additional processes. See the large gap between the blue line (CPS) and the red line (load) around seconds 130 to 240, which corresponds to a higher number of processes handling the traffic.

In short, no matter how tough the job is, we can do it by bringing in more friends :).

Now let’s see what actually happened there:

- we start with a preset value of 4 UDP processes and hit them with ~10 CPS. The resulting load (over all the processes related to this interface) is ~7%.

- around seconds 20-60 the CPS increases (up to a maximum of 100 CPS), but not enough to generate more than 25% internal load, well below the 70% scale-up threshold, so nothing happens.

- around second 100, as the load is below 18% (it is 7%), OpenSIPS downscales in cascade to 2 processes. Note that the internal load increases a bit, as the same amount of traffic (CPS) is now distributed over a smaller number of processes. Also note that the downscaling didn’t occur sooner due to a protection mechanism (to prevent flip-flopping between forking and terminating processes): no process is terminated within a certain time window after startup or after a fork.

- starting with second 120, the traffic (CPS) increases and pushes the internal load over the 70% threshold. This results in the forking of 3 more processes to handle the traffic. As a result, even though the CPS jumped from 10 to 350, the load was kept at ~70%, thanks to the additional processes.

- around second 270, as the load has decreased below 18% and the time window since the last fork has passed, OpenSIPS gradually terminates 3 processes. It is interesting to note that each time a process is terminated, the internal load increases a bit (same traffic, fewer processes), but without exceeding any upper threshold (OpenSIPS takes care of this by pre-evaluating the resulting load after a downscaling).

- around second 300 a small spike in traffic triggers the fork of a new process. After that, the CPS drops to 10.

- as a result of the low traffic, once the time window (since the last fork) has passed, OpenSIPS aggressively terminates processes until it reaches the lower limit of 2 (as per the profile).
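The two safeguards mentioned in the walkthrough, the time window after a fork and the pre-evaluation of the load after a downscaling, can be sketched in Python as follows. This is an illustration under stated assumptions, not the OpenSIPS code: it assumes the group's work is redistributed evenly over the remaining processes, and the function names and the `cooldown_cycles` parameter are hypothetical (the article does not give the length of the window). The sketch deliberately leaves out the "below 18% for 10 cycles" rule and shows only the guards.

```python
from typing import List, Tuple


def projected_load(load: float, procs: int) -> float:
    """Group load (percent) after removing one process.

    Assumption: the same amount of work is spread evenly over procs - 1.
    """
    return load * procs / (procs - 1)


def may_terminate(load: float, procs: int, min_procs: int,
                  up_threshold: float, cycles_since_fork: int,
                  cooldown_cycles: int) -> bool:
    """Check the guards before terminating one process."""
    if procs <= min_procs:
        return False  # already at the profile's lower limit
    if cycles_since_fork < cooldown_cycles:
        return False  # still inside the protection window after a fork
    # Do not downscale if that would push the load over the up threshold.
    return projected_load(load, procs) < up_threshold


def cascade(load: float, procs: int, min_procs: int,
            up_threshold: float) -> List[Tuple[int, float]]:
    """Terminate processes one by one, recording (procs, load) per step."""
    steps = []
    while procs > min_procs and projected_load(load, procs) < up_threshold:
        load = projected_load(load, procs)
        procs -= 1
        steps.append((procs, round(load, 1)))
    return steps
```

Under these assumptions, `cascade(7.0, 4, 2, 70.0)` gives `[(3, 9.3), (2, 14.0)]`: going from 4 to 2 processes at ~7% load raises the load only slightly, which matches the small increase described around second 100. At 60% load on 5 processes, `may_terminate` refuses, since the projected 75% would exceed the 70% threshold.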

Conclusion

Auto-scaling is a smart and easy-to-use feature that simply absolves you of any worries or concerns about properly scaling your OpenSIPS: fewer worries, less work, more resources for you.

How can you not love OpenSIPS 3.0?

More reasons? Join us in Amsterdam for the OpenSIPS Summit and learn more about OpenSIPS 3.0!

